Supply is All You Need

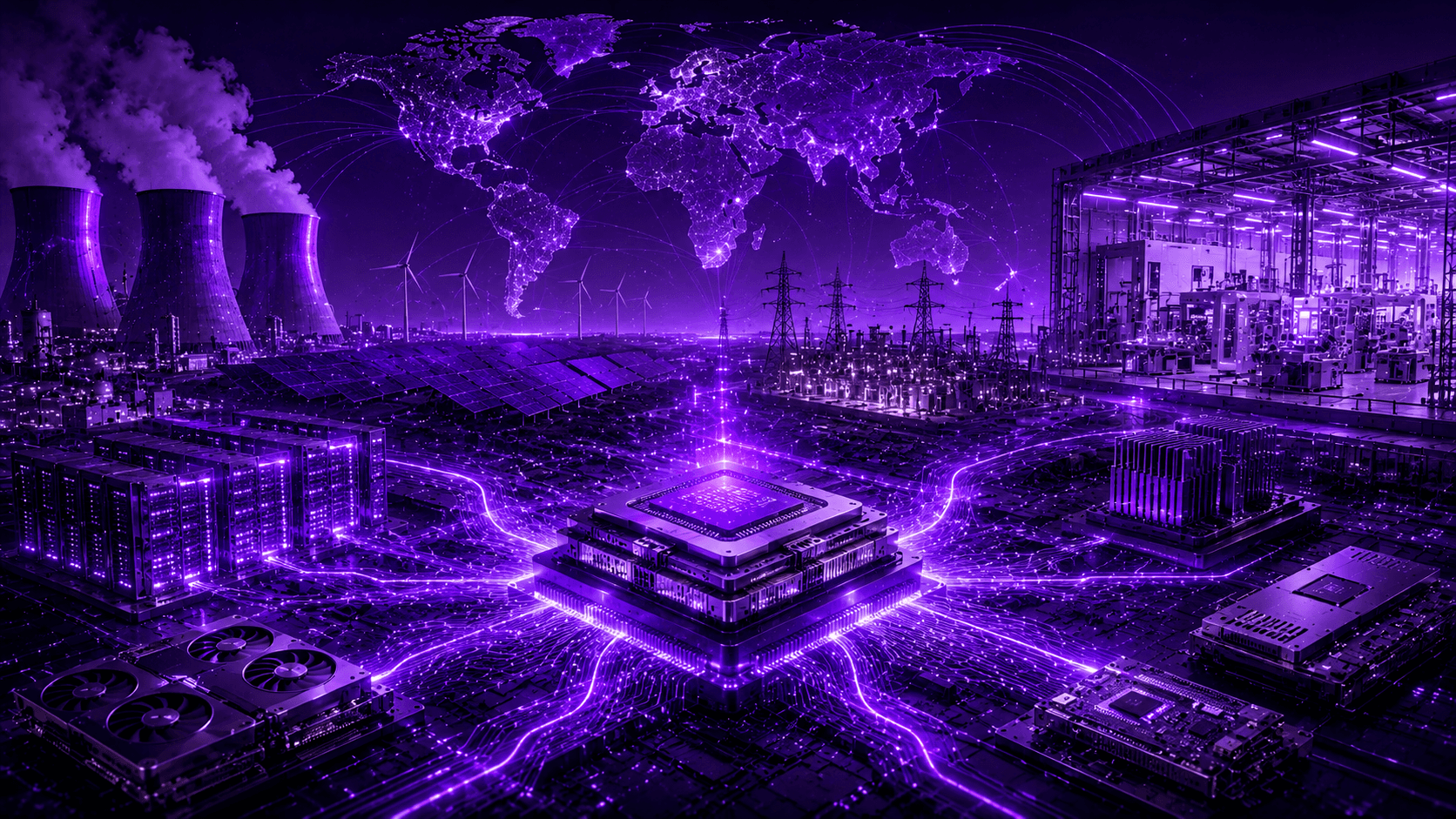

The AI industry is addicted to software hype. We’re constantly sold the fantasy that algorithmic breakthroughs and trillions of parameters will magically solve enterprise problems. It's time to wake up to physical reality: AI progress is no longer constrained by math. It is constrained by memory bandwidth, electrical grids, advanced packaging, and a hyper-fragile, monopolized supply chain.

Executive Summary: The Physics Wall

For the last decade, AI progress was a software game measured in parameter counts and dataset sizes. Today, the limiting factor is industrial infrastructure. The industry has consolidated around a dangerously small number of fabrication facilities, equipment vendors, and hyperscale cloud providers.

The monolithic AI scaling strategy is dead. If your enterprise AI strategy relies entirely on acquiring single-vendor hardware manufactured by a single fab in a geopolitical hot zone, you aren't building a moat—you're building a glass house.

The Real Bottlenecks: Memory and Packaging

Look past the raw compute marketing nonsense. Modern AI accelerators aren't bottlenecked by arithmetic capability; they are starving for memory throughput.

High Bandwidth Memory (HBM3E, and soon HBM4) is the true gatekeeper of AI performance. But slapping more HBM onto a processor requires advanced packaging such as TSMC's CoWoS (Chip-on-Wafer-on-Substrate), which places the compute die and the memory stacks side by side on a shared interposer. This is the industry's dirty secret: it doesn't matter how many GPU dies you can print if you don't have the packaging capacity to wire them to memory. TSMC's packaging capacity is notoriously constrained, creating a massive, hidden chokepoint for the entire industry.
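To put a number on "starving for memory throughput," here is a minimal roofline-style sketch in Python. The accelerator figures (1,000 TFLOP/s of peak compute, 4 TB/s of memory bandwidth) are illustrative assumptions, not the specs of any real part, and the function names are hypothetical. The one workload figure we lean on is simple arithmetic: when a 16-bit weight is read from memory and used once, that is 2 bytes moved for one multiply-add (2 FLOPs), or about 1 FLOP per byte.

```python
def attainable_tflops(peak_tflops: float, mem_bw_tbs: float, flops_per_byte: float) -> float:
    """Roofline estimate: throughput is capped by compute or by memory traffic,
    whichever limit is lower. TB/s times FLOPs/byte gives TFLOP/s."""
    return min(peak_tflops, mem_bw_tbs * flops_per_byte)


def is_memory_bound(peak_tflops: float, mem_bw_tbs: float, flops_per_byte: float) -> bool:
    """True when the memory system, not the arithmetic units, sets the ceiling."""
    return mem_bw_tbs * flops_per_byte < peak_tflops


# Assumed, illustrative accelerator (not a real product).
PEAK_TFLOPS = 1000.0
MEM_BW_TBS = 4.0

# Each 16-bit weight read once and used once: roughly 1 FLOP per byte moved.
low_reuse = attainable_tflops(PEAK_TFLOPS, MEM_BW_TBS, flops_per_byte=1.0)
print(low_reuse)                # 4.0
print(low_reuse / PEAK_TFLOPS)  # 0.004 of peak compute is usable
```

Under these assumptions the chip delivers well under one percent of its advertised compute on low-reuse work. The workload only becomes compute-bound once it performs at least `PEAK_TFLOPS / MEM_BW_TBS` (here 250) FLOPs per byte moved, which is why bandwidth, and the packaging that delivers it, matters more than the headline FLOP count.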

The Infrastructure Nightmare: Power and Cooling

AI systems are fundamentally constrained by the laws of thermodynamics. We are moving from megawatt deployments to gigawatt-scale energy planning. Hyperscalers are exploring nuclear power partnerships because data-center power demand is growing faster than grids can expand.

Add to this the brutal reality of cooling. Thermal density is skyrocketing. We are hitting the limits of air and liquid cooling, draining regional water tables, and competing for scarce electrical transformers. Math is infinite; copper, water, and electricity are not.

As we have pointed out in previous posts, much of this spiral is driven by marketing hype that pushes ever-higher tokens per second and other metrics that do not translate into enterprise value.

The Monopolized Supply Chain

The entire advanced AI semiconductor market balances on the edge of a knife.

ASML: The sole supplier of the Extreme Ultraviolet (EUV) lithography machines required for leading-edge fabrication at 5nm-class nodes and below.

TSMC: Controls the vast majority of advanced-node manufacturing and advanced packaging.

The Hyperscalers: Lock developers into proprietary software ecosystems designed to reinforce hardware monopolies.

This isn't an ecosystem that can keep scaling. Every layer has a single point of failure.

Government Interference and Silicon Nationalism

And just when the supply chain couldn't get any more brittle, governments have entered the chat.

Hardware is now a weapon of geopolitical warfare. We are seeing unprecedented government interference in the silicon supply chain through aggressive export controls, sweeping tariffs, and "silicon nationalism."

If you are locked into a single hardware architecture, your entire operational budget is at the mercy of arbitrary trade policies. A single tariff hike on imported silicon or a sudden export ban on advanced accelerators can instantly obliterate your unit economics. You can't code your way out of a federal embargo.
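A back-of-the-envelope sketch shows how a tariff flows straight into unit economics. Every number below (hardware price, service life, utilization, power draw, electricity price, tariff rate) is a made-up assumption for illustration, and `cost_per_accelerator_hour` is a hypothetical helper, not a standard formula from any vendor.

```python
def cost_per_accelerator_hour(
    hardware_price: float,   # purchase price before tariffs, in dollars
    tariff_rate: float,      # e.g. 0.25 for a 25% import tariff
    lifetime_hours: float,   # service life of the hardware, in hours
    utilization: float,      # fraction of those hours doing paid work (0 to 1)
    power_kw: float,         # average draw while busy, in kilowatts
    price_per_kwh: float,    # electricity price, in dollars per kWh
) -> float:
    """Amortized hardware cost plus energy cost for one busy accelerator-hour."""
    landed_price = hardware_price * (1.0 + tariff_rate)
    amortized = landed_price / (lifetime_hours * utilization)
    energy = power_kw * price_per_kwh
    return amortized + energy


# Assumed scenario: a $30,000 accelerator, 4 years of service, 60% utilization.
scenario = dict(
    hardware_price=30_000.0,
    lifetime_hours=4 * 8760.0,
    utilization=0.6,
    power_kw=1.0,
    price_per_kwh=0.10,
)

baseline = cost_per_accelerator_hour(tariff_rate=0.0, **scenario)
with_tariff = cost_per_accelerator_hour(tariff_rate=0.25, **scenario)
print(f"baseline: ${baseline:.2f}/h, with 25% tariff: ${with_tariff:.2f}/h")
print(f"increase: {100 * (with_tariff / baseline - 1):.1f}%")
```

Because amortized hardware dominates the hourly cost in this scenario, most of the tariff passes through to the price of every accelerator-hour. A fleet that can shift work to hardware sourced elsewhere has a lever here; a single-vendor fleet does not.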

The I/ONX Reality: Heterogeneous Compute is Survival

History shows us what happens to monopolized infrastructure—whether it's railroads, telecom, or energy grids. Eventually, the bottleneck strangles the market until diversification breaks the monopoly open.

We are reaching the limits of the centralized, single-stack AI infrastructure. The future belongs to heterogeneous compute.

At I/ONX, we don't bet on a single hardware vendor. We don't care if the underlying silicon is a GPU, an ASIC, an FPGA, or an emerging accelerator. Our approach spans three layers (the hardware, the orchestration, and the agent harness) and is designed to dynamically allocate workloads across diversified hardware, insulating our customers from vendor lock-in, supply chain shocks, and tariff spikes.
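To illustrate the general idea of allocating workloads across mixed hardware, here is a deliberately simple greedy sketch. It is not the I/ONX implementation: the `Pool` and `Workload` types, the field names, and the "cheapest pool that fits" rule are all hypothetical, chosen only to show the shape of the problem.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Pool:
    """A group of identical devices (hypothetical model)."""
    name: str
    kind: str             # "gpu", "asic", "fpga", ...
    free_units: int       # devices currently available
    mem_gb: int           # memory per device
    cost_per_hour: float  # assumed cost of one device-hour


@dataclass
class Workload:
    name: str
    mem_gb: int  # memory needed per device
    units: int   # number of devices needed


def allocate(workloads: List[Workload], pools: List[Pool]) -> Dict[str, Optional[str]]:
    """Greedy placement: each workload goes to the cheapest pool that has
    enough memory per device and enough free devices. None means unplaced."""
    placement: Dict[str, Optional[str]] = {}
    for w in workloads:
        candidates = [
            p for p in pools
            if p.mem_gb >= w.mem_gb and p.free_units >= w.units
        ]
        if not candidates:
            placement[w.name] = None
            continue
        best = min(candidates, key=lambda p: p.cost_per_hour)
        best.free_units -= w.units  # reserve the devices
        placement[w.name] = best.name
    return placement


# Illustrative inventory and jobs; all values are assumptions.
pools = [
    Pool("gpu-a", "gpu", free_units=1, mem_gb=80, cost_per_hour=4.0),
    Pool("asic-b", "asic", free_units=2, mem_gb=32, cost_per_hour=1.5),
]
jobs = [Workload("small", 16, 1), Workload("large", 64, 1), Workload("large-2", 64, 1)]
result = allocate(jobs, pools)
print(result)  # {'small': 'asic-b', 'large': 'gpu-a', 'large-2': None}
```

The point of the sketch is the decision boundary, not the algorithm: once placement is expressed in terms of requirements (memory, device count, cost) rather than a vendor name, a shortage or price shock in one pool changes where work lands instead of whether it runs. A production scheduler would also weigh latency, data locality, and software compatibility.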

The industry wants you to blindly join the waitlist for the next generation of monopolized silicon. We built a platform that bypasses the waitlist entirely. The harness is the product; everything else is just raw material.
